🕵️‍♀️ Agents

AI agents are LLM applications designed to perform tasks independently or alongside other AIs and humans. Their tasks range from simple ones like web searches to complex ones like building web applications and conducting research. Here you'll find frameworks for building agents, platforms for deploying and hosting them, and tools for orchestrating agent workflows.

27 tools

Open-source agent development kit from Google for building multi-agent systems

TypeScript-first AI framework for building agents, RAG pipelines, and workflows

Framework for building stateful AI agents with persistent memory, formerly MemGPT

Open-source cloud sandboxes for running AI-generated code securely in Firecracker microVMs

Agents overview

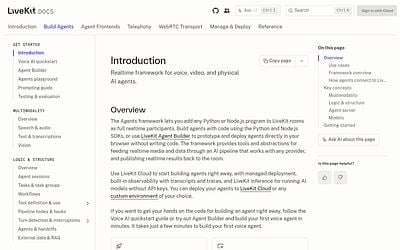

AI agent platforms and tools span the full lifecycle from building to deploying autonomous LLM applications. Agent frameworks provide the SDKs and abstractions for creating agents that can reason, plan, and take actions using external tools. Agent hosting and deployment platforms handle the infrastructure side, running agents 24/7 on dedicated compute with browser automation, messaging integrations, and monitoring built in.

The agent ecosystem is evolving rapidly. Platforms range from low-code agent builders to developer-focused SDKs that give fine-grained control over planning, tool selection, and safety guardrails. Multi-agent architectures, where specialized agents collaborate on different aspects of a task, are becoming a common pattern for complex workflows. Managed agent platforms are emerging to handle the operational complexity of running agents in production.

Key considerations when choosing agent tooling include whether you need a framework to build custom agents or a platform to deploy pre-built ones, supported tool integrations, planning and reasoning capabilities, safety controls, observability into agent decision-making, and the ability to define custom workflows and fallback behaviors.

Frequently Asked Questions

What is an AI agent?

An AI agent is an LLM application that can autonomously plan and execute multi-step tasks. Unlike a chatbot that responds to single prompts, an agent can use tools (APIs, code execution, web browsing), maintain state across steps, and iterate until it achieves a defined goal.
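
The loop described above (choose a tool, run it, keep state, repeat until the goal is met) can be sketched in a few lines. This is a minimal illustration, not the API of any framework listed on this page: `fake_model`, `TOOLS`, and `run_agent` are hypothetical names, and `fake_model` is a hard-coded stand-in for a real LLM call so the example runs offline.

```python
from typing import Any, Callable

# Hypothetical tools: plain functions the agent is allowed to call.
def add(a: float, b: float) -> float:
    return a + b

def multiply(a: float, b: float) -> float:
    return a * b

TOOLS: dict[str, Callable[..., float]] = {"add": add, "multiply": multiply}

def fake_model(goal: str, history: list[dict[str, Any]]) -> dict[str, Any]:
    """Stand-in for an LLM call (assumption): picks the next action from history."""
    if len(history) == 0:
        return {"tool": "add", "args": {"a": 2, "b": 3}}
    if len(history) == 1:
        # The second action depends on the result of the first one.
        return {"tool": "multiply", "args": {"a": history[-1]["result"], "b": 4}}
    return {"final": history[-1]["result"]}

def run_agent(goal: str, max_steps: int = 5):
    history: list[dict[str, Any]] = []   # state carried across steps
    for _ in range(max_steps):           # iterate until done or out of budget
        action = fake_model(goal, history)
        if "final" in action:
            return action["final"], history
        result = TOOLS[action["tool"]](**action["args"])
        history.append({**action, "result": result})
    raise RuntimeError("step budget exhausted before the goal was reached")

answer, steps = run_agent("compute (2 + 3) * 4")
print(answer, len(steps))  # 20 2
```

In a real agent the model call replaces `fake_model`, but the shape stays the same: the history is the state, and the step budget keeps the loop from running forever.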

What is the difference between an agent framework and an agent platform?

An agent framework (like CrewAI or Mastra) gives you code-level building blocks to create custom agents. An agent platform (like PrivateClawd) provides managed infrastructure to deploy and run agents in production, handling hosting, monitoring, and integrations. Some teams use both: a framework to build the agent and a platform to run it.

When should I build an agent instead of a simple LLM chain?

Use agents when the task requires dynamic decision-making: when the number of steps is not known in advance, when tool selection depends on intermediate results, or when the system needs to handle errors and retry with different approaches. For predictable, fixed-step workflows, a simple chain is more reliable and easier to debug.
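
As a rough illustration of the difference, the sketch below puts a fixed chain next to a small fallback loop. All function names here are hypothetical and no LLM is involved; the point is only that the chain always runs the same steps, while the second routine decides at runtime how many attempts it needs and which approach to try next.

```python
from functools import reduce
from typing import Callable

# Fixed chain: the steps and their order are known in advance.
def clean(text: str) -> str:
    return " ".join(text.split())

def truncate(text: str, limit: int = 40) -> str:
    return text[:limit]

def label(text: str) -> str:
    return f"summary: {text}"

CHAIN: list[Callable[[str], str]] = [clean, truncate, label]

def run_chain(text: str) -> str:
    return reduce(lambda value, step: step(value), CHAIN, text)

# Dynamic behavior: try one approach, and switch to another on failure.
def parse_strict(raw: str) -> float:
    return float(raw)

def parse_lenient(raw: str) -> float:
    return float(raw.replace(",", "").strip())

def run_with_fallback(raw: str, strategies: list[Callable[[str], float]]):
    tried: list[str] = []
    for strategy in strategies:
        tried.append(strategy.__name__)
        try:
            return strategy(raw), tried
        except ValueError:
            continue  # this approach failed; move on to the next one
    raise ValueError(f"all strategies failed for {raw!r}: {tried}")

print(run_chain("  hello   world  "))  # summary: hello world
print(run_with_fallback("1,250", [parse_strict, parse_lenient]))
```

The chain is easy to test because every input takes the same path. The fallback routine is harder to reason about, since the path depends on intermediate results, which is the trade-off the answer above describes.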

How do I make agents reliable in production?

Set clear boundaries on which tools the agent can access, enforce timeouts and retry limits, add human-in-the-loop checkpoints for high-stakes actions, log every decision for debugging, and use evaluation frameworks to test agent behavior across diverse scenarios. Start with narrow, well-defined tasks before expanding scope.
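
Several of these safeguards can live in one wrapper around every tool call. The sketch below is one possible shape, using only the Python standard library; `guarded_call`, `ToolDenied`, `needs_approval`, and `approve` are hypothetical names chosen for illustration, and the approval callback stands in for whatever human review step a team actually uses.

```python
import logging
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Any, Callable

log = logging.getLogger("agent.guard")

class ToolDenied(Exception):
    """Raised when a call is outside the allowlist or a reviewer rejects it."""

def guarded_call(
    name: str,
    args: dict[str, Any],
    tools: dict[str, Callable[..., Any]],
    needs_approval: set[str],
    approve: Callable[[str, dict[str, Any]], bool],
    timeout_s: float = 2.0,
    max_attempts: int = 3,
) -> Any:
    if name not in tools:                                   # tool allowlist
        log.warning("denied: %s is not on the allowlist", name)
        raise ToolDenied(name)
    if name in needs_approval and not approve(name, args):  # human checkpoint
        log.warning("denied: reviewer rejected %s %s", name, args)
        raise ToolDenied(name)

    last_error = None
    for attempt in range(1, max_attempts + 1):              # retry limit
        pool = ThreadPoolExecutor(max_workers=1)
        try:
            result = pool.submit(tools[name], **args).result(timeout=timeout_s)
            log.info("ok: %s %s -> %r (attempt %d)", name, args, result, attempt)
            return result
        except FutureTimeout as exc:
            last_error = exc
            log.error("timeout: %s after %.1fs (attempt %d)", name, timeout_s, attempt)
        except Exception as exc:
            last_error = exc
            log.error("error: %s raised %r (attempt %d)", name, exc, attempt)
        finally:
            # A timed-out worker thread is abandoned here, not killed.
            pool.shutdown(wait=False)
    raise RuntimeError(f"{name} failed after {max_attempts} attempts") from last_error

# Usage: a tool that fails once, then succeeds on the retry.
calls = {"n": 0}

def flaky_lookup(key: str) -> str:
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("transient failure")
    return key.upper()

result = guarded_call(
    "lookup", {"key": "abc"},
    tools={"lookup": flaky_lookup},
    needs_approval=set(),
    approve=lambda name, args: False,
)
```

Routing every call through a single function like this also gives evaluation runs one place to capture the log of decisions.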
