📊 Observability & Analytics

Specialized DevOps tools for optimizing LLMs, from parameter tuning that improves task-specific performance to analytics for monitoring and refining LLM applications.

32 tools

LLM Observability 17

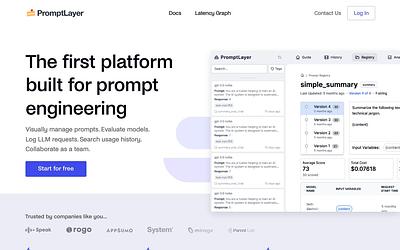

Open-source prompt management, evaluation, and observability for LLM apps

Comet provides an end-to-end model evaluation platform for AI developers.

Traces, evals, prompt management and metrics to debug and improve your LLM application.

LLM Evaluation 11

Open-source ML and LLM evaluation with 100+ built-in metrics and CI/CD integration

Other 4

Observability & Analytics overview

Observability and analytics tools for AI infrastructure give engineering teams deep visibility into how their LLM applications perform in production. These platforms go beyond traditional APM by tracking model-specific metrics like token usage, latency per request, prompt/completion quality, and cost attribution across providers.
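As a rough illustration of the model-specific metrics mentioned above, the following minimal sketch records token usage, latency, and cost per request and then attributes cost by provider. It is not the API of any tool listed here. The provider names, model names, prices, and the `call_fn` stand-in for a provider SDK call are all hypothetical.

```python
import time
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {
    ("provider_a", "model-small"): {"prompt": 0.0005, "completion": 0.0015},
    ("provider_b", "model-large"): {"prompt": 0.0030, "completion": 0.0060},
}


@dataclass
class CallRecord:
    provider: str
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_s: float

    @property
    def cost_usd(self) -> float:
        # Cost = tokens * price per 1K tokens / 1000, summed over prompt and completion.
        price = PRICE_PER_1K[(self.provider, self.model)]
        return (
            self.prompt_tokens * price["prompt"]
            + self.completion_tokens * price["completion"]
        ) / 1000


def traced_call(provider, model, call_fn, prompt, log):
    """Time call_fn(prompt) and append a CallRecord to log.

    call_fn is a stand-in for a provider SDK call (an assumption); it must
    return a tuple of (text, prompt_tokens, completion_tokens).
    """
    start = time.perf_counter()
    text, prompt_tokens, completion_tokens = call_fn(prompt)
    latency_s = time.perf_counter() - start
    log.append(CallRecord(provider, model, prompt_tokens, completion_tokens, latency_s))
    return text


def cost_by_provider(log):
    """Sum the recorded cost of every call, grouped by provider."""
    totals = defaultdict(float)
    for record in log:
        totals[record.provider] += record.cost_usd
    return dict(totals)
```

In practice an observability platform would typically capture these fields through its own SDK or framework integration. The point of the sketch is only to show which data a per-request record usually carries.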

Whether you are running a single model or orchestrating multi-step agent workflows, observability tools help you identify regressions, debug unexpected outputs, and optimize spend. Many integrate directly with popular frameworks and SDKs such as LangChain, LlamaIndex, and the OpenAI SDKs, making instrumentation straightforward.

The tools in this category range from full-stack LLM platforms with built-in evaluation suites to lightweight logging libraries. Some focus on real-time monitoring dashboards, while others emphasize offline analysis and dataset curation for fine-tuning.

Related stacks

See how observability & analytics tools fit into a full infrastructure stack.

Frequently Asked Questions

What is LLM observability?

LLM observability is the practice of collecting, analyzing, and acting on data from large language model applications in production. It covers token-level tracing, latency monitoring, cost tracking, and output quality evaluation, giving teams the insight needed to debug issues and improve model performance over time.

How is AI observability different from traditional APM?

Traditional APM tracks request latency, error rates, and throughput. AI observability adds model-specific dimensions: prompt/completion pairs, token counts, embedding similarity scores, hallucination detection, and per-provider cost breakdowns. It also handles non-deterministic outputs, which standard monitoring tools are not designed for.

Do I need observability if I only use the OpenAI API?

Yes. Even with a single provider, observability helps you track costs, catch quality regressions after model updates, debug prompt failures, and understand usage patterns. It becomes even more valuable when you add caching, fallback providers, or fine-tuned models.
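One simple way to catch a quality regression after a model update is to compare evaluation scores on a fixed prompt set before and after the change. The sketch below assumes you already have numeric scores in the range 0 to 1 from some evaluator. The function name and the `max_drop` threshold are hypothetical, and a production setup would likely use a proper statistical test rather than a plain difference of means.

```python
from statistics import mean


def detect_regression(baseline_scores, current_scores, max_drop=0.05):
    """Compare mean evaluation scores from before and after a model update.

    Returns (regressed, drop), where drop is the baseline mean minus the
    current mean and regressed is True when drop exceeds max_drop.
    """
    if not baseline_scores or not current_scores:
        raise ValueError("both score lists must be non-empty")
    drop = mean(baseline_scores) - mean(current_scores)
    return drop > max_drop, drop


# Example: scores collected on the same prompts before and after an update.
regressed, drop = detect_regression([0.9, 0.8, 1.0], [0.7, 0.8, 0.75])
```

Running the same check on every deployment or provider-side model change turns a vague sense that answers got worse into a signal you can alert on.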

What should I look for in an LLM observability tool?

Key capabilities include trace-level logging of prompts and completions, cost and latency dashboards, integration with your LLM framework, evaluation and scoring features, and the ability to export datasets for fine-tuning. Open-source options offer flexibility, while managed platforms reduce operational overhead.
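To make the dataset export capability concrete, here is a minimal sketch that filters logged traces by an evaluation score and serializes the prompt/completion pairs as JSON Lines, a common format for fine-tuning data. The trace fields (`prompt`, `completion`, `score`) and the `min_score` threshold are assumptions for illustration, not the schema of any particular tool.

```python
import json


def to_jsonl_lines(traces, min_score=0.8):
    """Return one JSON string per trace whose score is at least min_score.

    Each trace is assumed to be a dict with "prompt", "completion", and
    "score" keys (a hypothetical schema).
    """
    lines = []
    for trace in traces:
        if trace["score"] >= min_score:
            row = {"prompt": trace["prompt"], "completion": trace["completion"]}
            lines.append(json.dumps(row, ensure_ascii=False))
    return lines


traces = [
    {"prompt": "Summarize the report.", "completion": "A short summary.", "score": 0.95},
    {"prompt": "Translate to French.", "completion": "???", "score": 0.20},
]
lines = to_jsonl_lines(traces)  # keeps only the first trace
```

Writing `"\n".join(lines)` to a file yields a JSONL dataset. Filtering on score before export keeps low-quality completions out of the fine-tuning set.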
