deepinfra

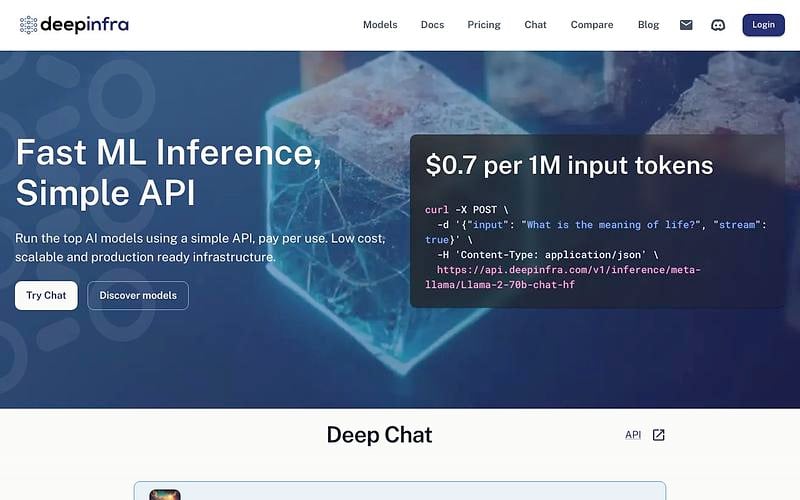

Run the top AI models using a simple API, pay per use. Low-cost, scalable, production-ready infrastructure.

DeepInfra hosts roughly 77 open-weight models as serverless API endpoints, including current open-source frontier models (Kimi K2 family, Qwen3.5 family, GLM-5, DeepSeek V3.2, gpt-oss-120B, MiniMax-M2, NVIDIA Nemotron, Meta Llama 3.x and 4.x). Beyond text generation it supports embeddings, image generation (Flux 3), text-to-speech, and speech recognition.

The API is OpenAI-compatible, so switching from OpenAI usually means changing a base URL and API key. Per-token pricing sits at the lower end of the market on Artificial Analysis as of April 2026, with gpt-oss-120B listed at around $0.08 per 1M blended tokens. DeepInfra runs its own US-based infrastructure, including NVIDIA Blackwell B200 systems.

Pricing: Per-token usage

What is DeepInfra?

DeepInfra is a serverless inference platform that hosts open-weight AI models as API endpoints. You send requests to an OpenAI-compatible API and pay per token, with no infrastructure setup or management required.

Supported Models

DeepInfra hosts roughly 77 open-weight models. Model families include Meta Llama (3.1, 3.2, 4 Scout, 4 Maverick with up to 1M token context), DeepSeek V3, Qwen 2.5 and 3, NVIDIA Nemotron, Mistral, Google Gemma, GLM-5, and Kimi K2.5. Beyond text generation, DeepInfra supports embedding models (BGE-M3), image generation (Flux 3 at $0.07/image), text-to-speech (Chatterbox), and speech recognition. 65 of the 77 models support function calling, and 30 are reasoning models.

API and Integration

The API is compatible with the OpenAI client libraries. If you are already using the OpenAI SDK, switching to DeepInfra requires changing the base URL and API key. The platform supports streaming responses, function calling, JSON mode, and structured output. Framework integrations include LangChain, LlamaIndex, AutoGen, and Vercel AI SDK. Custom model deployment and LoRA adapter support are available for both text and image models.
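
The base-URL-and-key switch described above can be sketched with the Python standard library alone. This is a minimal illustration, not DeepInfra's official client code: the base URL, the model identifier, and the helper name `build_chat_request` are assumptions made for the example and should be checked against DeepInfra's current documentation. The request is built but not sent, so the snippet runs without a key or network access.

```python
import json
import urllib.request

# Assumed values: verify both against DeepInfra's current documentation.
DEEPINFRA_BASE_URL = "https://api.deepinfra.com/v1/openai"
EXAMPLE_MODEL = "meta-llama/Meta-Llama-3.1-8B-Instruct"


def build_chat_request(api_key, messages, model=EXAMPLE_MODEL,
                       base_url=DEEPINFRA_BASE_URL, stream=False):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {"model": model, "messages": messages, "stream": stream}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(
    api_key="YOUR_DEEPINFRA_API_KEY",  # placeholder, not a real key
    messages=[{"role": "user", "content": "Say hello in one sentence."}],
)
print(request.full_url)

# Sending the request needs a valid key and network access:
# with urllib.request.urlopen(request) as response:
#     reply = json.load(response)
#     print(reply["choices"][0]["message"]["content"])
```

With the OpenAI Python SDK, the equivalent change is passing `base_url` and `api_key` to the `OpenAI(...)` client constructor and leaving the rest of the calling code as it is.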

Infrastructure and Performance

DeepInfra runs on its own US-based infrastructure, including NVIDIA Blackwell HGX B200 systems. The optimization stack includes TensorRT-LLM, speculative decoding, multi-token prediction, and KV-cache-aware routing. Top models reach 200-317 tokens per second output speed with time-to-first-token as low as 0.35 seconds. MoE models on Blackwell with NVFP4 quantization achieve up to 20x cost reduction compared to dense models on older hardware.
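
To put the speed figures in context, a rough response-time estimate is time-to-first-token plus output length divided by output speed. The sketch below applies that simple model to the numbers quoted above. The function name and the 1,000-token response length are hypothetical, and since the quoted figures are best-case values for top models, real latency will vary with model, prompt length, and load.

```python
def estimate_response_seconds(output_tokens, tokens_per_second, ttft_seconds):
    """Approximate wall-clock time to stream a full response.

    Simple model: time-to-first-token plus generation time at a
    constant output speed. Queueing and network overhead are ignored.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return ttft_seconds + output_tokens / tokens_per_second


# Hypothetical 1,000-token response at both ends of the quoted speed range.
for speed in (200, 317):
    seconds = estimate_response_seconds(1000, speed, ttft_seconds=0.35)
    print(f"{speed} tokens/s: about {seconds:.2f} s")
```

Under these assumptions a 1,000-token response takes roughly 5.4 seconds at 200 tokens per second and roughly 3.5 seconds at 317.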

Pricing

DeepInfra uses per-token pricing with rates varying by model. Prices range from $0.02 per million tokens (Llama 3.2 3B) to around $1.50 per million tokens for larger models. There are no minimum commitments, and you pay only for what you use. Automatic tier progression reduces costs as spending increases. The platform lists SOC 2 and ISO 27001 certifications along with GDPR and HIPAA compliance.
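
Per-token pricing turns into a monthly bill with one multiplication. The sketch below uses the two per-million rates quoted above. The 250M-token workload and the function name are hypothetical, and the calculation assumes a single flat rate: it ignores tier discounts and any difference between input and output token prices.

```python
from decimal import Decimal

TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_usd(total_tokens, usd_per_million_tokens):
    """Return the cost in USD, rounded to cents, at a flat per-million rate."""
    rate = Decimal(str(usd_per_million_tokens))
    cost = Decimal(total_tokens) * rate / TOKENS_PER_MILLION
    return cost.quantize(Decimal("0.01"))


monthly_tokens = 250_000_000  # hypothetical workload
for label, rate in (("low end", "0.02"), ("high end", "1.50")):
    print(f"{label}: ${estimate_cost_usd(monthly_tokens, rate)}")
```

For that workload the estimate comes to $5.00 at the $0.02 rate and $375.00 at the $1.50 rate, which shows how strongly model choice drives the bill.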

Who Should Use DeepInfra?

DeepInfra is a good fit for teams that want to use open-weight models without managing GPU infrastructure. The OpenAI-compatible API makes it easy to switch from OpenAI or test multiple open-source models. The Blackwell infrastructure gives it a performance and cost edge for large-scale workloads. It is particularly useful for cost-sensitive production use cases where open-weight models perform well enough for the task.

deepinfra Alternatives

Packet.ai

On-demand NVIDIA Blackwell GPU cloud with per-second billing, SSH, CLI, and an OpenAI-compatible inference API
