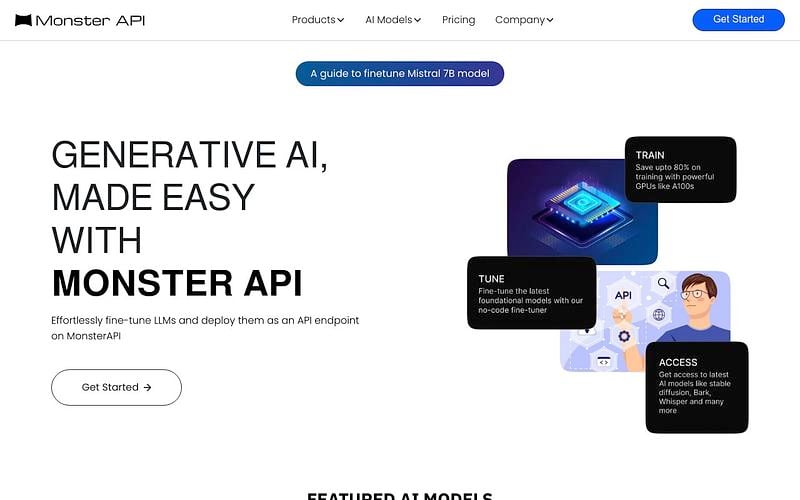

Monster API

Access, fine-tune, and deploy LLMs using our affordable and scalable APIs.

MonsterAPI is an AI computing platform that helps developers build generative AI applications with no-code, cost-effective tooling. The platform runs on a decentralised GPU cloud built from the ground up to serve machine learning workloads affordably and at scale.

It provides access to pre-hosted AI APIs such as Stable Diffusion XL and Whisper Large-v2, along with several LLMs, including Llama 2, Zephyr, and Falcon. Developers can fine-tune and deploy these models using cURL, Python (PyPI), and Node.js clients, at a lower cost than running them on conventional cloud GPUs.

Pricing: Usage-based

Monster API Alternatives

Explore 65 products in the Inference APIs category.
