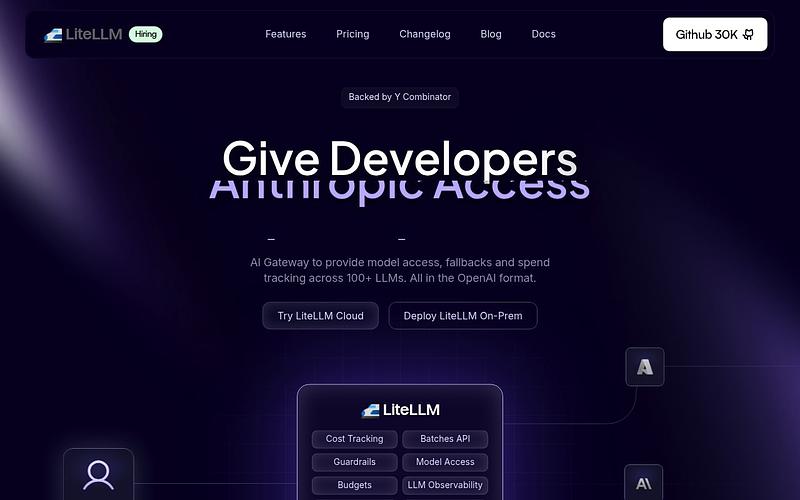

LiteLLM

Unified OpenAI-compatible proxy for 100+ LLM providers with cost tracking and load balancing

LiteLLM is a Python SDK and proxy server that provides a single OpenAI-compatible interface for calling over 100 LLM APIs, including OpenAI, Anthropic, Azure, Bedrock, Vertex AI, Cohere, and Ollama. It handles cost tracking, budget management, virtual API keys, guardrails, and load balancing across deployments. The project reports that the proxy can handle 1,500+ requests per second. The core SDK and proxy are open source under the MIT license, with enterprise features such as SSO and advanced authentication available for teams that need them.
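To make the proxy's job concrete, here is a minimal, self-contained Python sketch of three of the ideas above: one OpenAI-style response shape for every backend, round-robin load balancing across deployments that share a model alias, and per-request cost tracking against a budget. It is a hypothetical illustration, not LiteLLM's code or API (the real SDK exposes `litellm.completion(model=..., messages=...)` and a `Router` class). `MiniRouter`, `Deployment`, the `provider-a`/`provider-b` names, the stub `echo` backend, and the per-1k-token prices are all invented for this example, and the whitespace-based token count stands in for a real tokenizer.

```python
from dataclasses import dataclass, field
from itertools import cycle
from typing import Callable, Dict, Iterator, List, Tuple

# Hypothetical provider stub: prompt -> (reply_text, prompt_tokens, completion_tokens).
# A real backend would make a network call to the provider's API here.
ProviderFn = Callable[[str], Tuple[str, int, int]]


@dataclass
class Deployment:
    name: str                 # illustrative label such as "provider-a/chat"
    call: ProviderFn
    usd_per_1k_input: float   # made-up prices, not real provider rates
    usd_per_1k_output: float


@dataclass
class MiniRouter:
    deployments: Dict[str, List[Deployment]]  # model alias -> interchangeable deployments
    budget_usd: float
    spent_usd: float = 0.0
    _pickers: Dict[str, Iterator[Deployment]] = field(default_factory=dict)

    def completion(self, model: str, messages: List[dict]) -> dict:
        # Refuse new requests once spend has reached the budget
        # (the request that crosses the limit is still allowed to complete).
        if self.spent_usd >= self.budget_usd:
            raise RuntimeError(f"budget of ${self.budget_usd} exhausted")
        # Round-robin over the deployments registered under this alias.
        if model not in self._pickers:
            self._pickers[model] = cycle(self.deployments[model])
        dep = next(self._pickers[model])
        text, p_tok, c_tok = dep.call(messages[-1]["content"])
        cost = (p_tok * dep.usd_per_1k_input + c_tok * dep.usd_per_1k_output) / 1000
        self.spent_usd += cost
        # Same OpenAI-style response shape regardless of which backend answered.
        return {
            "model": dep.name,
            "choices": [{"index": 0,
                         "message": {"role": "assistant", "content": text}}],
            "usage": {"prompt_tokens": p_tok, "completion_tokens": c_tok,
                      "total_tokens": p_tok + c_tok},
            "cost_usd": cost,
        }


def echo(prompt: str) -> Tuple[str, int, int]:
    # Stub backend: whitespace-separated words stand in for real tokens.
    reply = f"echo: {prompt}"
    return reply, len(prompt.split()), len(reply.split())


router = MiniRouter(
    deployments={"chat": [
        Deployment("provider-a/chat", echo, 1.0, 2.0),
        Deployment("provider-b/chat", echo, 0.5, 1.0),
    ]},
    budget_usd=1.0,
)
msgs = [{"role": "user", "content": "hello there"}]
r1 = router.completion("chat", msgs)  # served by provider-a/chat
r2 = router.completion("chat", msgs)  # served by provider-b/chat
```

Round-robin is the simplest balancing policy; a production proxy would also need retries, fallbacks, health checks, and real token counts, and this budget check only blocks requests after the limit has already been reached.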

Pricing: Free / enterprise

LiteLLM Alternatives

Explore 25 products in the Frameworks & Stacks category.

GPT4All

Desktop app and Python SDK for running open-source LLMs locally on any device

Jan

Open-source desktop app for running LLMs locally with a clean GUI

llama.cpp

LLM inference in C/C++ with broad hardware support and aggressive quantization

Google ADK

Open-source agent development kit from Google for building multi-agent systems
