Beam

Open Source

Free Trial

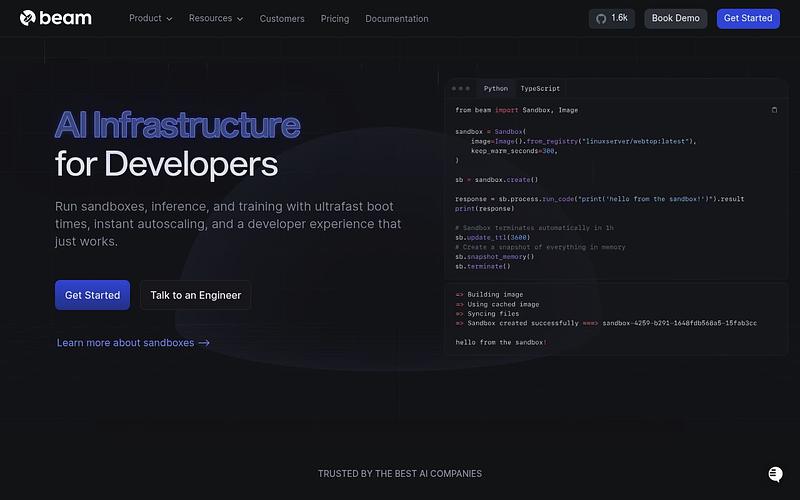

Open-source serverless GPU cloud with sub-second cold starts and auto-scaling

Beam is a serverless cloud platform for AI inference, sandboxes, and background jobs. It provides sub-second cold starts via checkpoint restore, auto-scaling to thousands of instances, and persistent sandboxes. It supports Python, Node.js, and arbitrary Docker images, with built-in task queues, cron jobs, and web endpoints. Beam is powered by Beta9, an open-source GPU cloud engine that can be self-hosted. A100s and H100s start at around $1.35/hr, with per-second billing.
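To make per-second billing concrete, here is a minimal sketch of the arithmetic. It assumes the charge is simply the hourly rate prorated by the second, with no minimum charge or platform-specific rounding; Beam's actual billing rules may differ. The function name `per_second_cost` is hypothetical and is not part of any Beam SDK.

```python
from decimal import Decimal, ROUND_HALF_UP


def per_second_cost(hourly_rate_usd: str, seconds: int) -> Decimal:
    """Prorate an hourly rate over `seconds` of usage (assumed linear billing)."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    # Multiply before dividing so exact cases stay exact in Decimal arithmetic.
    cost = Decimal(hourly_rate_usd) * seconds / 3600
    # Round to a hundredth of a cent; the rounding rule is an assumption.
    return cost.quantize(Decimal("0.0001"), rounding=ROUND_HALF_UP)


# A full hour at the listed ~$1.35/hr rate costs the hourly rate itself.
full_hour = per_second_cost("1.35", 3600)

# A one-minute job at $0.15/hr is billed for 60 seconds only.
one_minute = per_second_cost("0.15", 60)
```

Under this assumption, short jobs cost a fraction of the hourly rate instead of being rounded up to a full hour.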

Pricing: Pay-as-you-go

Hosting

Cloud + Self-hosted

Pricing

Usage Based, from $0.15/hr (T4 GPU)

HQ

🇺🇸 United States

Founded

2021

GitHub

1,654 stars
