LLMWise

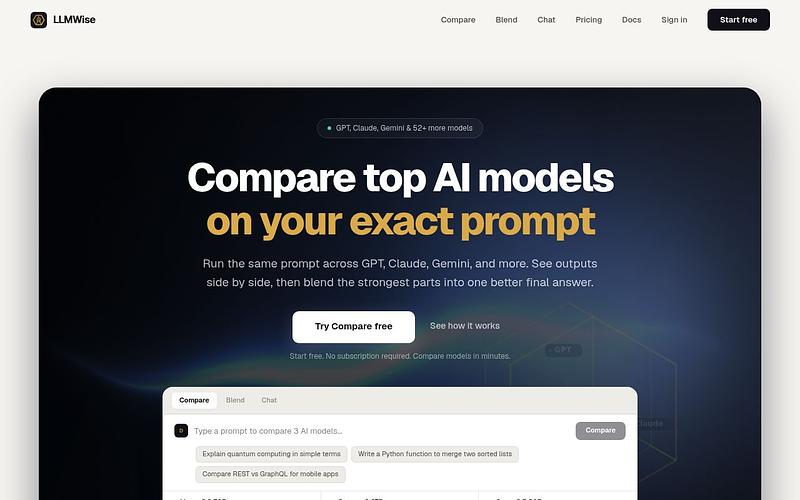

Multi-LLM API orchestration platform for comparing and blending AI models

LLMWise provides a unified API to access 31+ LLMs, including GPT, Claude, Gemini, DeepSeek, and Llama. It can compare models on the same prompt and blend their outputs into a single answer. Pricing is credit-based, with a free tier.

What is LLMWise?

LLMWise is an API gateway and orchestration layer for large language models. It provides a single API to access 62+ models from 20+ providers (OpenAI, Anthropic, Google, Meta, xAI, DeepSeek, and others). Beyond basic routing, it adds comparison, blending, and judging capabilities.

How It Works

LLMWise operates through several modes. Chat mode sends a prompt to a single model. Compare mode runs the same prompt across up to 9 models simultaneously, streaming responses back with per-model latency, token counts, and cost. Blend mode sends a prompt to multiple models and synthesizes the strongest elements into a single response, supporting strategies like Consensus, Council, and Mixture of Agents (MoA). Judge mode lets one model evaluate and rank the outputs of others. A failover mesh mode adds circuit breakers that detect unhealthy providers and reroute automatically.
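
The failover behavior described above follows the general circuit-breaker pattern. The sketch below is a generic illustration of that pattern, not LLMWise's implementation: the names `CircuitBreaker` and `call_with_failover`, the failure threshold, and the cooldown are all assumptions made for the example, and the providers are plain Python callables standing in for real model endpoints.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple


class CircuitBreaker:
    """Hypothetical per-provider breaker: opens after repeated failures."""

    def __init__(self, failure_threshold: int = 3, cooldown_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the breaker is closed

    def allow(self) -> bool:
        # Closed breaker: always allow. Open breaker: allow a trial call
        # only once the cooldown has elapsed.
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.cooldown_seconds

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # open (or re-open) the breaker


def call_with_failover(prompt: str,
                       providers: List[Tuple[str, Callable[[str], str]]],
                       breakers: Dict[str, CircuitBreaker]) -> Tuple[str, str]:
    """Try providers in order, skipping any whose breaker is open."""
    for name, send in providers:
        breaker = breakers.setdefault(name, CircuitBreaker())
        if not breaker.allow():
            continue  # provider considered unhealthy, reroute to the next one
        try:
            reply = send(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, reply
    raise RuntimeError("no healthy provider available")
```

In this sketch a provider that keeps failing stops receiving traffic once its breaker opens, and gets a single trial request after the cooldown; a real gateway would likely also track latency and error types.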

Key Features

The platform supports BYOK (Bring Your Own Keys), letting users plug in their own API keys for OpenAI, Anthropic, and Google. Keys are encrypted at rest. Smart routing automatically matches prompts to optimal models based on the task type. Official SDKs are available for Python and TypeScript, with framework integrations for LangChain, Next.js, and React.

Pricing

LLMWise uses a credit-based, pay-as-you-go model with no subscription required. A free tier provides 20 credits (no card required). Paid tiers start at $3 for 300 credits (Starter), $10 for 1,100 credits (Standard), and $25 for 3,000 credits (Power). Chat costs 1 credit per request, Compare costs 3, Blend costs 4, and Judge costs 5. Credits are reserved per request and settled to actual token usage afterward. Paid credits never expire.
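
To make the arithmetic concrete, the short sketch below works out the price per credit for each pack and how many requests a pack covers at the listed per-mode rates. The figures are copied from the paragraph above; the helper names are illustrative only. Because credits are settled to actual token usage after each request, the request counts should be read as estimates based on the listed rates, not guarantees.

```python
# (price in USD, credits) for each pack, as listed in the pricing paragraph.
PACKS = {
    "Free": (0, 20),
    "Starter": (3, 300),
    "Standard": (10, 1100),
    "Power": (25, 3000),
}

# Listed credit cost per request for each mode.
MODE_CREDITS = {"chat": 1, "compare": 3, "blend": 4, "judge": 5}


def price_per_credit(pack: str) -> float:
    """USD per credit for a pack."""
    dollars, credits = PACKS[pack]
    return dollars / credits


def max_requests(pack: str, mode: str) -> int:
    """Whole requests a pack covers at the listed per-request rate."""
    _, credits = PACKS[pack]
    return credits // MODE_CREDITS[mode]


if __name__ == "__main__":
    for pack in PACKS:
        counts = {mode: max_requests(pack, mode) for mode in MODE_CREDITS}
        print(f"{pack}: ${price_per_credit(pack):.4f}/credit, requests: {counts}")
```

At these rates the Starter pack works out to $0.01 per credit and covers 100 Compare requests, while the free tier's 20 credits cover 20 Chat requests or 4 Judge requests. The per-credit price falls as pack size grows.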

Who Should Use LLMWise?

LLMWise is a good fit for teams that want to evaluate which model performs best for specific use cases without managing multiple provider integrations. The compare and blend features are useful for applications where output quality matters more than raw speed. Note that the API uses a native format (not OpenAI-compatible), so it is not a drop-in replacement. The product launched in February 2026, so it has a limited track record.

